Tracing Anthropic

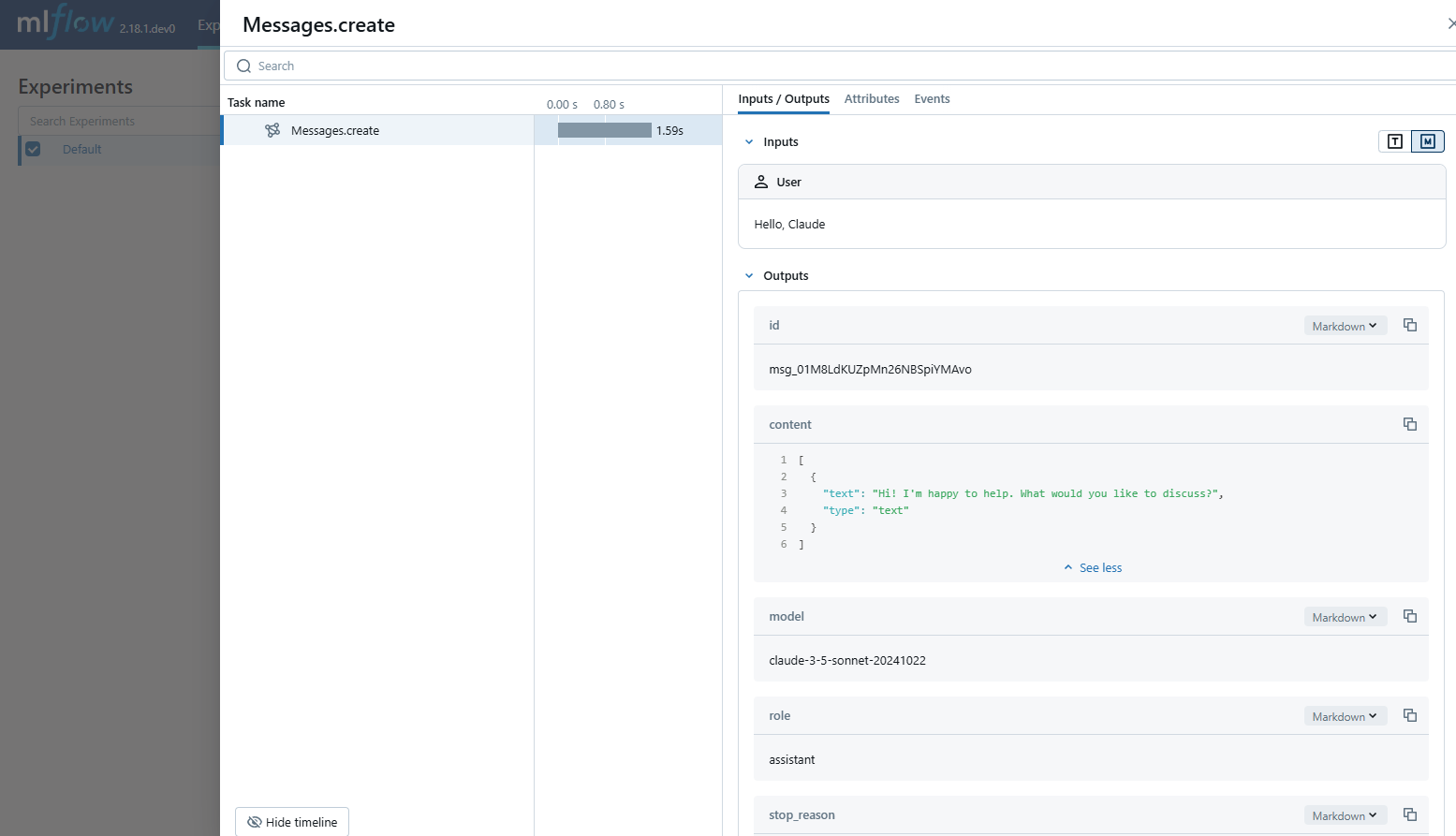

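MLflow Tracing provides automatic tracing for Anthropic LLMs. Once auto-tracing for Anthropic is enabled by calling the mlflow.anthropic.autolog() function, MLflow captures nested traces and logs them to the active MLflow Experiment whenever the Anthropic Python SDK is invoked.

```python
import mlflow

mlflow.anthropic.autolog()
```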

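MLflow Tracing automatically captures the following information about Anthropic calls:

- Prompts and completion responses
- Latencies
- Model name
- Additional metadata such as temperature and max_tokens, if specified
- Function calling, if returned in the response
- Any exception, if raised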

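Currently, the MLflow Anthropic integration supports tracing only for text interactions: full inputs cannot be recorded for multi-modal inputs. Tracing of asynchronous APIs is supported starting with MLflow 2.21.0; earlier versions trace synchronous calls only.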

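Supported APIs

MLflow supports automatic tracing for the following Anthropic APIs:

| Chat Completion | Function Calling | Streaming | Async | Image | Batch |
|---|---|---|---|---|---|
| ✅ | ✅ | - | ✅ (*1) | - | - |

(*1) Async support was added in MLflow 2.21.0.

To request support for additional APIs, please open a feature request on GitHub.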
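Because async tracing depends on the MLflow version, a script may want to check the installed version before relying on it. The sketch below is one way to do that, assuming a plain `major.minor.patch` version string; the helper names (`parse_version`, `supports_async_tracing`, `installed_mlflow_version`) are hypothetical and not part of MLflow.

```python
from __future__ import annotations

import re
from importlib import metadata

# Async tracing for Anthropic was added in MLflow 2.21.0 (per the table above).
ASYNC_MIN_VERSION = (2, 21, 0)


def parse_version(version: str) -> tuple[int, ...]:
    """Parse the leading numeric part of a version string, e.g. '2.21.0rc1' -> (2, 21, 0)."""
    match = re.match(r"(\d+)(?:\.(\d+))?(?:\.(\d+))?", version)
    if match is None:
        raise ValueError(f"Unrecognized version string: {version!r}")
    # Missing minor/patch components are treated as 0.
    return tuple(int(part or 0) for part in match.groups())


def supports_async_tracing(version: str) -> bool:
    """Hypothetical helper: True if this MLflow version traces async Anthropic calls."""
    return parse_version(version) >= ASYNC_MIN_VERSION


def installed_mlflow_version() -> str | None:
    """Return the installed MLflow version, or None if MLflow is not installed."""
    try:
        return metadata.version("mlflow")
    except metadata.PackageNotFoundError:
        return None


if __name__ == "__main__":
    version = installed_mlflow_version()
    if version is None:
        print("MLflow is not installed")
    else:
        print(f"MLflow {version}: async tracing supported = {supports_async_tracing(version)}")
```

Pre-release suffixes such as `rc1` are ignored here, which is a deliberate simplification; a project that already depends on the `packaging` library could compare versions with it instead.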

Basic Example

```python
import os

import anthropic
import mlflow

# Enable auto-tracing for Anthropic
mlflow.anthropic.autolog()

# Optional: Set a tracking URI and an experiment
mlflow.set_tracking_uri("https://:5000")
mlflow.set_experiment("Anthropic")

# Configure your API key.
client = anthropic.Anthropic(api_key=os.environ["ANTHROPIC_API_KEY"])

# Use the create method to create a new message.
message = client.messages.create(
    model="claude-3-5-sonnet-20241022",
    max_tokens=1024,
    messages=[
        {"role": "user", "content": "Hello, Claude"},
    ],
)
```
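The returned message stores its output in `message.content`, a list of content blocks: text blocks carry a `.text` attribute, while other block types such as `tool_use` do not. A small helper that joins only the text blocks is sketched below. The name `extract_text` is hypothetical, and `SimpleNamespace` objects stand in for real SDK blocks so the snippet runs without an API key; with the real client you would call `extract_text(message.content)`.

```python
from types import SimpleNamespace
from typing import Any, Iterable


def extract_text(content: Iterable[Any]) -> str:
    """Hypothetical helper: concatenate the text of all `text` blocks in a response.

    Blocks of other types (for example `tool_use`) are skipped.
    """
    return "".join(block.text for block in content if block.type == "text")


# Stand-ins for SDK content blocks, so the example runs offline.
fake_content = [
    SimpleNamespace(type="text", text="Hello! "),
    SimpleNamespace(type="tool_use", id="toolu_01", name="get_weather", input={"city": "Tokyo"}),
    SimpleNamespace(type="text", text="How can I help?"),
]

print(extract_text(fake_content))  # Hello! How can I help?
```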

Async

Since MLflow 2.21.0, MLflow Tracing supports the asynchronous API of the Anthropic SDK. Its usage is the same as for the synchronous API.

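```python
import asyncio

import anthropic
import mlflow

# Enable trace logging
mlflow.anthropic.autolog()

client = anthropic.AsyncAnthropic()


async def main():
    response = await client.messages.create(
        model="claude-3-5-sonnet-20241022",
        max_tokens=1024,
        messages=[
            {"role": "user", "content": "Hello, Claude"},
        ],
    )
    return response


# In a Jupyter notebook, you can `await main()` directly instead of using asyncio.run()
response = asyncio.run(main())
```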
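The main benefit of the async client is that several requests can be in flight at once. The sketch below illustrates this with `asyncio.gather`; `FakeAsyncMessages` is a hypothetical stand-in that only sleeps, used so the overlap can be observed without network access or an API key. With the real SDK, `client.messages` from an `AsyncAnthropic` client would take its place.

```python
import asyncio
import time
from types import SimpleNamespace


class FakeAsyncMessages:
    """Hypothetical stand-in for `AsyncAnthropic().messages`: sleeps instead of calling the API."""

    def __init__(self, delay: float = 0.2) -> None:
        self.delay = delay

    async def create(self, *, model: str, max_tokens: int, messages: list) -> SimpleNamespace:
        await asyncio.sleep(self.delay)  # simulate latency without blocking the event loop
        prompt = messages[-1]["content"]
        return SimpleNamespace(content=[SimpleNamespace(type="text", text=f"echo: {prompt}")])


async def ask_all(messages_api, prompts: list[str]) -> list[str]:
    # Start every request at once; gather returns results in the order of the inputs.
    responses = await asyncio.gather(
        *(
            messages_api.create(
                model="claude-3-5-sonnet-20241022",
                max_tokens=1024,
                messages=[{"role": "user", "content": p}],
            )
            for p in prompts
        )
    )
    return [r.content[0].text for r in responses]


if __name__ == "__main__":
    start = time.perf_counter()
    answers = asyncio.run(ask_all(FakeAsyncMessages(delay=0.2), ["a", "b", "c"]))
    elapsed = time.perf_counter() - start
    print(answers)  # ['echo: a', 'echo: b', 'echo: c']
    print(f"took {elapsed:.2f}s")  # close to 0.2s, not 0.6s, because the sleeps overlap
```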

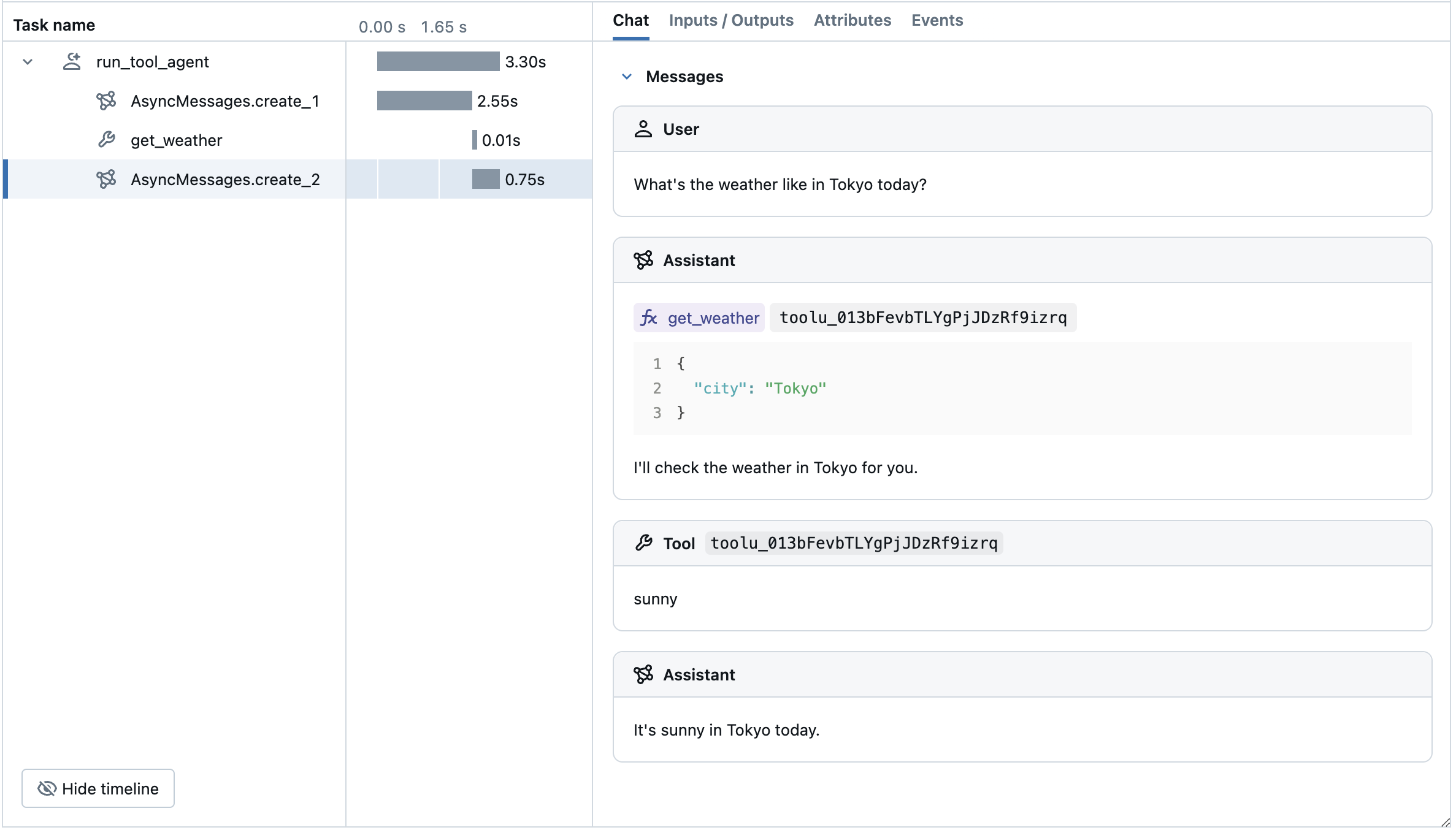

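Advanced Example: Tool Calling Agent

MLflow Tracing automatically captures tool calling responses from Anthropic models. The function instructions in the response are highlighted in the trace UI. In addition, you can annotate a tool function with the @mlflow.trace decorator to create a span for the tool's execution.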
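To make the idea of a span more concrete, the toy decorator below records a function's name, inputs, output, and latency each time the function completes. It is a simplified illustration, not MLflow's implementation: `toy_trace` and `RECORDED_SPANS` are hypothetical names, and the real @mlflow.trace also nests spans into a trace and logs them to the active experiment.

```python
import asyncio
import functools
import inspect
import time

# Hypothetical in-memory store; MLflow instead logs spans to the active experiment.
RECORDED_SPANS: list[dict] = []


def toy_trace(span_type: str):
    """Toy decorator that records one 'span' per successful call of the wrapped function."""

    def decorator(func):
        def record(inputs, output, start):
            RECORDED_SPANS.append(
                {
                    "name": func.__name__,
                    "span_type": span_type,
                    "inputs": inputs,
                    "output": output,
                    "latency_s": time.perf_counter() - start,
                }
            )

        if inspect.iscoroutinefunction(func):
            # Async tools (like get_weather in the example below) must be awaited inside the wrapper.
            @functools.wraps(func)
            async def async_wrapper(*args, **kwargs):
                start = time.perf_counter()
                output = await func(*args, **kwargs)
                record({"args": args, "kwargs": kwargs}, output, start)
                return output

            return async_wrapper

        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            output = func(*args, **kwargs)
            record({"args": args, "kwargs": kwargs}, output, start)
            return output

        return wrapper

    return decorator


@toy_trace(span_type="TOOL")
async def get_weather(city: str) -> str:
    return {"Tokyo": "sunny", "Paris": "rainy"}.get(city, "unknown")


if __name__ == "__main__":
    print(asyncio.run(get_weather(city="Tokyo")))  # sunny
    print(RECORDED_SPANS[0]["name"], RECORDED_SPANS[0]["output"])  # get_weather sunny
```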

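The following example shows how to implement a simple function calling agent using Anthropic tool calling and MLflow Tracing for Anthropic. It also uses the asynchronous Anthropic SDK so that the agent can handle concurrent calls without blocking.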

```python
import asyncio

import anthropic
import mlflow
from mlflow.entities import SpanType

# Enable auto-tracing so that each messages.create call is recorded as a span
mlflow.anthropic.autolog()

client = anthropic.AsyncAnthropic()
model_name = "claude-3-5-sonnet-20241022"


# Define the tool function. Decorate it with `@mlflow.trace` to create a span for its execution.
@mlflow.trace(span_type=SpanType.TOOL)
async def get_weather(city: str) -> str:
    if city == "Tokyo":
        return "sunny"
    elif city == "Paris":
        return "rainy"
    return "unknown"


tools = [
    {
        "name": "get_weather",
        "description": "Returns the weather condition of a given city.",
        "input_schema": {
            "type": "object",
            "properties": {"city": {"type": "string"}},
            "required": ["city"],
        },
    }
]

_tool_functions = {"get_weather": get_weather}


# Define a simple tool calling agent
@mlflow.trace(span_type=SpanType.AGENT)
async def run_tool_agent(question: str):
    messages = [{"role": "user", "content": question}]

    # Invoke the model with the given question and available tools
    ai_msg = await client.messages.create(
        model=model_name,
        messages=messages,
        tools=tools,
        max_tokens=2048,
    )
    messages.append({"role": "assistant", "content": ai_msg.content})

    # If the model did not request any tool, return its text answer directly
    tool_calls = [c for c in ai_msg.content if c.type == "tool_use"]
    if not tool_calls:
        return ai_msg.content[-1].text

    # Invoke each requested tool with the specified arguments and
    # collect all results into a single user message
    tool_results = []
    for tool_call in tool_calls:
        if tool_func := _tool_functions.get(tool_call.name):
            tool_result = await tool_func(**tool_call.input)
        else:
            raise RuntimeError("An invalid tool is returned from the assistant!")
        tool_results.append(
            {
                "type": "tool_result",
                "tool_use_id": tool_call.id,
                "content": tool_result,
            }
        )
    messages.append({"role": "user", "content": tool_results})

    # Send the tool results to the model and get a new response.
    # `tools` is passed again because the history now contains tool_use blocks.
    response = await client.messages.create(
        model=model_name,
        messages=messages,
        tools=tools,
        max_tokens=2048,
    )
    return response.content[-1].text


async def main():
    # Run the tool calling agent for several cities concurrently
    cities = ["Tokyo", "Paris", "Sydney"]
    questions = [f"What's the weather like in {city} today?" for city in cities]
    answers = await asyncio.gather(*(run_tool_agent(q) for q in questions))
    for city, answer in zip(cities, answers):
        print(f"{city}: {answer}")


# In a Jupyter notebook, you can `await main()` directly instead of using asyncio.run()
asyncio.run(main())
```
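The agent above passes `tool_call.input` straight to the tool function. A minimal sketch of checking that input against the tool's declared `input_schema` before dispatching is shown below. It covers only `required` fields and simple `type` checks, not full JSON Schema; `validate_tool_input` is a hypothetical helper, and a complete validator such as the `jsonschema` package could be used instead.

```python
# Map a subset of JSON Schema type names to Python types (a simplification, not full JSON Schema).
_JSON_TYPES = {
    "string": str,
    "number": (int, float),
    "integer": int,
    "boolean": bool,
    "object": dict,
    "array": list,
}


def validate_tool_input(input_schema: dict, tool_input: dict) -> list[str]:
    """Hypothetical helper: return a list of problems; an empty list means the input looks valid."""
    problems = []
    for key in input_schema.get("required", []):
        if key not in tool_input:
            problems.append(f"missing required field '{key}'")
    properties = input_schema.get("properties", {})
    for key, value in tool_input.items():
        if key not in properties:
            problems.append(f"unexpected field '{key}'")
            continue
        expected = _JSON_TYPES.get(properties[key].get("type"))
        # bool is a subclass of int in Python, so reject it explicitly for numeric types.
        if expected is not None and (
            not isinstance(value, expected)
            or (isinstance(value, bool) and properties[key]["type"] in ("number", "integer"))
        ):
            problems.append(f"field '{key}' should be of type {properties[key]['type']}")
    return problems


weather_schema = {
    "type": "object",
    "properties": {"city": {"type": "string"}},
    "required": ["city"],
}

print(validate_tool_input(weather_schema, {"city": "Tokyo"}))  # []
print(validate_tool_input(weather_schema, {"town": 3}))
# ["missing required field 'city'", "unexpected field 'town'"]
```

Inside `run_tool_agent`, such a check could run as `validate_tool_input(tools[0]["input_schema"], tool_call.input)` before `await tool_func(...)`. Rejecting unknown fields is stricter than JSON Schema's default, which allows additional properties; that strictness is a design choice for this sketch.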

Disabling auto-tracing

Auto-tracing for Anthropic can be disabled globally by calling mlflow.anthropic.autolog(disable=True) or mlflow.autolog(disable=True).