Tracing OpenAI

MLflow Tracing provides automatic tracing for OpenAI. Enable it by calling the mlflow.openai.autolog() function; MLflow then captures traces of LLM invocations and logs them to the currently active MLflow experiment.

import mlflow

mlflow.openai.autolog()

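MLflow Tracing automatically captures the following information about OpenAI calls:

- Prompts and completion responses
- Latencies
- Model name
- Additional metadata such as temperature and max_tokens, if specified
- Function calls, if returned in the response
- Built-in tools such as web search, file search, and computer use
- Any exception, if raised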
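To make the list above concrete, here is a minimal, purely illustrative sketch of the kind of record an auto-tracing wrapper could build around a call: inputs, output, latency, model name, optional parameters, and any exception. It is not MLflow's implementation. `SpanRecord`, `traced_call`, and `fake_completion` are hypothetical names introduced only for this example, and no OpenAI request is made.

```python
import time
from dataclasses import dataclass, field
from typing import Any, Callable, Optional


@dataclass
class SpanRecord:
    """Hypothetical container mirroring the fields listed above."""

    inputs: dict
    output: Any = None
    latency_s: float = 0.0
    model: Optional[str] = None
    metadata: dict = field(default_factory=dict)
    exception: Optional[str] = None


def traced_call(fn: Callable[..., Any], **kwargs: Any) -> SpanRecord:
    """Call fn(**kwargs) and record what happened, even if it raises.

    For simplicity this sketch swallows the exception after recording it;
    a real tracer would normally re-raise.
    """
    record = SpanRecord(
        inputs={"messages": kwargs.get("messages")},
        model=kwargs.get("model"),
        # Keep only the optional parameters that were actually specified.
        metadata={k: kwargs[k] for k in ("temperature", "max_tokens") if k in kwargs},
    )
    start = time.perf_counter()
    try:
        record.output = fn(**kwargs)
    except Exception as exc:
        record.exception = repr(exc)
    finally:
        record.latency_s = time.perf_counter() - start
    return record


def fake_completion(model, messages, **params):
    """Stand-in for an LLM call so the sketch runs offline."""
    return f"echo: {messages[-1]['content']}"


record = traced_call(
    fake_completion,
    model="gpt-4o-mini",
    messages=[{"role": "user", "content": "What is the capital of France?"}],
    temperature=0.1,
)
print(record)
```

With mlflow.openai.autolog() enabled, none of this bookkeeping has to be written by hand; the sketch only shows which pieces of a call end up in a trace.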

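The MLflow OpenAI integration is not limited to tracing. MLflow offers a full tracking experience for OpenAI, including model tracking, prompt management, and evaluation. See the MLflow OpenAI Flavor documentation to learn more.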

Supported APIs

MLflow supports automatic tracing for the following OpenAI APIs. To request support for additional APIs, please open a feature request on GitHub.

Chat Completion API

| Normal | Function Calling | Structured Outputs | Streaming | Async | Image | Audio |
|---|---|---|---|---|---|---|
| ✅ | ✅ | ✅ (>=2.21.0) | ✅ (>=2.15.0) | ✅ (>=2.21.0) | - | - |

Responses API

| Normal | Function Calling | Structured Outputs | Web Search | File Search | Computer Use | Reasoning | Streaming | Async | Image |
|---|---|---|---|---|---|---|---|---|---|
| ✅ | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ | - |

The Responses API is supported since MLflow 2.22.0.
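The minimum versions quoted in the tables above can be collected into a small lookup to check what a given MLflow version is expected to trace. The following is a sketch only: the feature keys, `parse_version`, and `supported_features` are hypothetical names, and the version numbers are copied from this page rather than queried from MLflow.

```python
# Minimum MLflow versions as stated in the tables on this page.
MIN_VERSIONS = {
    "chat_streaming": (2, 15, 0),
    "chat_structured_outputs": (2, 21, 0),
    "chat_async": (2, 21, 0),
    "responses_api": (2, 22, 0),
}


def parse_version(version: str) -> tuple:
    """Parse 'X.Y.Z' into a tuple of ints, ignoring suffixes such as 'rc1'."""
    parts = []
    for piece in version.split(".")[:3]:
        digits = ""
        for ch in piece:
            if not ch.isdigit():
                break
            digits += ch
        parts.append(int(digits or 0))
    while len(parts) < 3:
        parts.append(0)
    return tuple(parts)


def supported_features(mlflow_version: str) -> list:
    """Return the names of features whose minimum version is satisfied."""
    current = parse_version(mlflow_version)
    return sorted(name for name, minimum in MIN_VERSIONS.items() if current >= minimum)


print(supported_features("2.21.0"))
```

In practice the argument would typically be `mlflow.__version__`.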

Agents SDK

See OpenAI Agents SDK Tracing for more details.

Embedding API

| Normal | Async |
|---|---|
| ✅ | ✅ |

Basic Example

Chat Completion API:

import openai
import mlflow

# Enable auto-tracing for OpenAI
mlflow.openai.autolog()

# Optional: Set a tracking URI and an experiment
# (replace <host> with the hostname of your tracking server)
mlflow.set_tracking_uri("https://<host>:5000")
mlflow.set_experiment("OpenAI")

openai_client = openai.OpenAI()

messages = [
    {
        "role": "user",
        "content": "What is the capital of France?",
    }
]

response = openai_client.chat.completions.create(
    model="gpt-4o-mini",
    messages=messages,
    temperature=0.1,
    max_tokens=100,
)

Responses API:

import openai
import mlflow

# Enable auto-tracing for OpenAI
mlflow.openai.autolog()

# Optional: Set a tracking URI and an experiment
# (replace <host> with the hostname of your tracking server)
mlflow.set_tracking_uri("https://<host>:5000")
mlflow.set_experiment("OpenAI")

openai_client = openai.OpenAI()

response = openai_client.responses.create(
    model="gpt-4o-mini", input="What is the capital of France?"
)

Streaming

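MLflow Tracing supports the streaming API of the OpenAI SDK. With the same auto-tracing setup, MLflow automatically traces the streaming response and renders the concatenated output in the span UI. The individual chunks of the response stream are also available in the Event tab.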

Chat Completion API:

import openai
import mlflow

# Enable trace logging
mlflow.openai.autolog()

client = openai.OpenAI()

stream = client.chat.completions.create(
    model="gpt-4o-mini",
    messages=[
        {"role": "user", "content": "How fast would a glass of water freeze on Titan?"}
    ],
    stream=True,  # Enable streaming response
)

# Print each text delta as it arrives
for chunk in stream:
    print(chunk.choices[0].delta.content or "", end="")

Responses API:

import openai
import mlflow

# Enable trace logging
mlflow.openai.autolog()

client = openai.OpenAI()

stream = client.responses.create(
    model="gpt-4o-mini",
    input="How fast would a glass of water freeze on Titan?",
    stream=True,  # Enable streaming response
)

# Each item in the stream is an event object
for event in stream:
    print(event)
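As a rough illustration of what "concatenated output" means for Chat Completion chunks, the sketch below joins the text deltas of a stream into one string. It is an assumption-laden stand-in, not MLflow's code: `make_chunk` and `concatenate_stream` are hypothetical helpers, and the chunks are fake objects that only mimic the `chunk.choices[0].delta.content` shape used above.

```python
from types import SimpleNamespace


def make_chunk(text):
    """Build a stand-in object shaped like chunk.choices[0].delta.content."""
    delta = SimpleNamespace(content=text)
    return SimpleNamespace(choices=[SimpleNamespace(delta=delta)])


def concatenate_stream(stream):
    """Join the text deltas of all chunks into a single string."""
    pieces = []
    for chunk in stream:
        if not chunk.choices:  # e.g. a chunk that carries only usage data
            continue
        # A delta without text has content=None, so fall back to "".
        pieces.append(chunk.choices[0].delta.content or "")
    return "".join(pieces)


fake_stream = [
    make_chunk("Very "),
    make_chunk("quickly"),
    make_chunk(None),
    make_chunk("."),
]
full_text = concatenate_stream(fake_stream)
print(full_text)
```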

Async

MLflow Tracing has supported the asynchronous API of the OpenAI SDK since MLflow 2.21.0. Usage is the same as for the synchronous API.

Chat Completion API:

import openai
import mlflow

# Enable trace logging
mlflow.openai.autolog()

client = openai.AsyncOpenAI()

# Top-level `await` works in notebooks; in a script, run this inside an async function.
response = await client.chat.completions.create(
    model="gpt-4o-mini",
    messages=[
        {"role": "user", "content": "How fast would a glass of water freeze on Titan?"}
    ],
    # Async streaming is also supported
    # stream=True
)

Responses API:

import openai
import mlflow

# Enable trace logging
mlflow.openai.autolog()

client = openai.AsyncOpenAI()

# Top-level `await` works in notebooks; in a script, run this inside an async function.
response = await client.responses.create(
    model="gpt-4o-mini", input="How fast would a glass of water freeze on Titan?"
)
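The async snippets above use a top-level `await`, which runs as written in a notebook but not in a plain script. A minimal sketch of the script form follows, using `asyncio.run` and `asyncio.gather` from the standard library. `FakeAsyncClient` is a hypothetical stand-in for `openai.AsyncOpenAI` so that the example runs offline; with the real client, the same structure would apply.

```python
import asyncio


class FakeAsyncClient:
    """Hypothetical stand-in for an async OpenAI client (no network access)."""

    async def create(self, model: str, input: str) -> str:
        await asyncio.sleep(0)  # yield control, as a real network call would
        return f"[{model}] answer to: {input}"


async def main() -> list:
    client = FakeAsyncClient()
    questions = ["How cold is Titan?", "How fast would water freeze there?"]
    # Issue both requests concurrently; results come back in request order.
    return await asyncio.gather(
        *(client.create(model="gpt-4o-mini", input=q) for q in questions)
    )


# asyncio.run creates an event loop, runs main() to completion, and closes the loop.
answers = asyncio.run(main())
print(answers)
```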

Function Calling

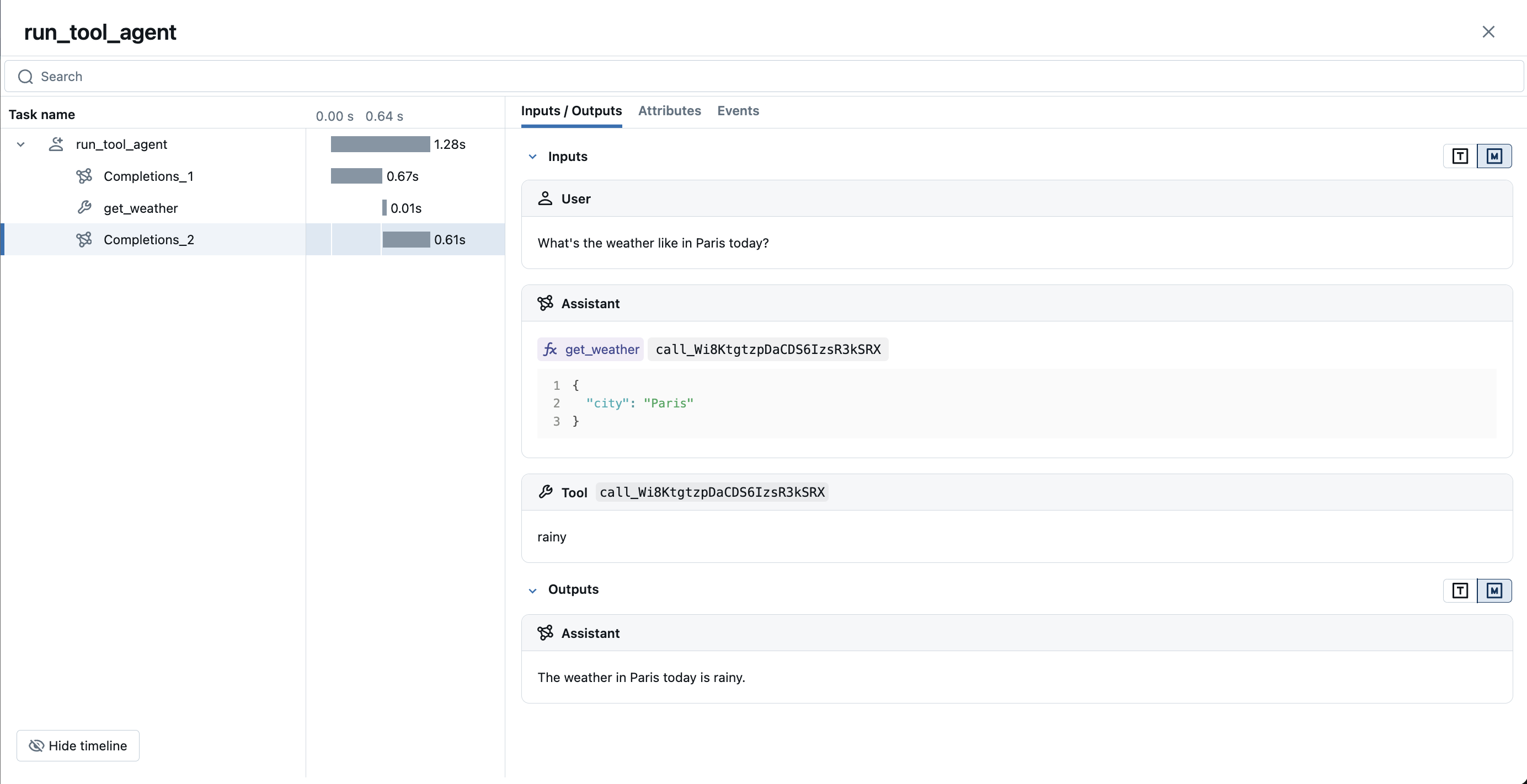

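MLflow Tracing automatically captures function calling responses returned by OpenAI models. The function instruction in the response is highlighted in the trace UI. You can also annotate the tool function with the @mlflow.trace decorator to create a span for the tool execution.

The following example implements a simple function calling agent using OpenAI function calling and MLflow Tracing for OpenAI.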

Chat Completion API:

import json

from openai import OpenAI

import mlflow
from mlflow.entities import SpanType

client = OpenAI()


# Define the tool function. Decorate it with `@mlflow.trace` to create a span for its execution.
@mlflow.trace(span_type=SpanType.TOOL)
def get_weather(city: str) -> str:
    if city == "Tokyo":
        return "sunny"
    elif city == "Paris":
        return "rainy"
    return "unknown"


tools = [
    {
        "type": "function",
        "function": {
            "name": "get_weather",
            "parameters": {
                "type": "object",
                "properties": {"city": {"type": "string"}},
            },
        },
    }
]

_tool_functions = {"get_weather": get_weather}


# Define a simple tool calling agent
@mlflow.trace(span_type=SpanType.AGENT)
def run_tool_agent(question: str):
    messages = [{"role": "user", "content": question}]

    # Invoke the model with the given question and available tools
    response = client.chat.completions.create(
        model="gpt-4o-mini",
        messages=messages,
        tools=tools,
    )
    ai_msg = response.choices[0].message
    messages.append(ai_msg)

    # If the model requests tool call(s), invoke the function with the specified arguments
    if tool_calls := ai_msg.tool_calls:
        for tool_call in tool_calls:
            function_name = tool_call.function.name
            if tool_func := _tool_functions.get(function_name):
                args = json.loads(tool_call.function.arguments)
                tool_result = tool_func(**args)
            else:
                raise RuntimeError("An invalid tool is returned from the assistant!")

            messages.append(
                {
                    "role": "tool",
                    "tool_call_id": tool_call.id,
                    "content": tool_result,
                }
            )

        # Send the tool results to the model and get a new response
        response = client.chat.completions.create(
            model="gpt-4o-mini", messages=messages
        )

    return response.choices[0].message.content


# Run the tool calling agent
question = "What's the weather like in Paris today?"
answer = run_tool_agent(question)

Responses API:

import json

import requests
from openai import OpenAI

import mlflow
from mlflow.entities import SpanType

client = OpenAI()


# Define the tool function. Decorate it with `@mlflow.trace` to create a span for its execution.
@mlflow.trace(span_type=SpanType.TOOL)
def get_weather(latitude, longitude):
    response = requests.get(
        f"https://api.open-meteo.com/v1/forecast?latitude={latitude}&longitude={longitude}&current=temperature_2m,wind_speed_10m&hourly=temperature_2m,relative_humidity_2m,wind_speed_10m"
    )
    data = response.json()
    return data["current"]["temperature_2m"]


tools = [
    {
        "type": "function",
        "name": "get_weather",
        "description": "Get current temperature for provided coordinates in celsius.",
        "parameters": {
            "type": "object",
            "properties": {
                "latitude": {"type": "number"},
                "longitude": {"type": "number"},
            },
            "required": ["latitude", "longitude"],
            "additionalProperties": False,
        },
        "strict": True,
    }
]


# Define a simple tool calling agent
@mlflow.trace(span_type=SpanType.AGENT)
def run_tool_agent(question: str):
    input_messages = [{"role": "user", "content": question}]

    # Invoke the model with the given question and available tools
    response = client.responses.create(
        model="gpt-4o-mini",
        input=input_messages,
        tools=tools,
    )

    # Invoke the function with the specified arguments.
    # This example assumes the first output item is the function call.
    tool_call = response.output[0]
    args = json.loads(tool_call.arguments)
    result = get_weather(args["latitude"], args["longitude"])

    # Send the tool results to the model and get a new response
    input_messages.append(tool_call)
    input_messages.append(
        {
            "type": "function_call_output",
            "call_id": tool_call.call_id,
            "output": str(result),
        }
    )

    response = client.responses.create(
        model="gpt-4o-mini",
        input=input_messages,
        tools=tools,
    )

    return response.output[0].content[0].text


# Run the tool calling agent
question = "What's the weather like in Paris today?"
answer = run_tool_agent(question)
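The Responses API example above reads the function call from `response.output[0]`. When the output may contain other items, or several function calls, one option is to filter by item type before dispatching. The sketch below shows that idea offline: `build_tool_outputs` and `TOOL_FUNCTIONS` are hypothetical names, `get_weather` is replaced by a fixed-value stand-in, and the output items are fake objects that only mimic the `type`, `name`, `arguments`, and `call_id` attributes used above.

```python
import json
from types import SimpleNamespace


def get_weather(latitude, longitude):
    """Offline stand-in for the tool above; returns a fixed temperature."""
    return 21.5


TOOL_FUNCTIONS = {"get_weather": get_weather}


def build_tool_outputs(output_items):
    """Return one function_call_output item per function_call item."""
    results = []
    for item in output_items:
        if getattr(item, "type", None) != "function_call":
            continue  # skip anything that is not a function call
        tool = TOOL_FUNCTIONS.get(item.name)
        if tool is None:
            raise RuntimeError(f"Unknown tool requested: {item.name}")
        args = json.loads(item.arguments)
        results.append(
            {
                "type": "function_call_output",
                "call_id": item.call_id,
                "output": str(tool(**args)),
            }
        )
    return results


fake_output = [
    SimpleNamespace(type="message", content=[]),
    SimpleNamespace(
        type="function_call",
        name="get_weather",
        call_id="call_1",
        arguments='{"latitude": 48.85, "longitude": 2.35}',
    ),
]
tool_outputs = build_tool_outputs(fake_output)
print(tool_outputs)
```

The returned items have the same shape as the `function_call_output` dictionary appended to the input in the example above.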

Disable auto-tracing

Auto-tracing for OpenAI can be disabled globally by calling mlflow.openai.autolog(disable=True) or mlflow.autolog(disable=True).
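If tracing should be off only for a limited block of code, the disable call can be wrapped in a context manager. This is a sketch under stated assumptions, not an MLflow feature: `autolog_disabled` is a hypothetical helper that accepts any autolog-style callable taking a `disable` keyword, and it re-enables tracing with default settings on exit, so any custom autolog options would need to be passed again. The demo uses a fake recorder so that it runs without MLflow installed.

```python
from contextlib import contextmanager


@contextmanager
def autolog_disabled(autolog):
    """Disable auto-tracing inside the `with` block, then re-enable it.

    `autolog` is any callable that accepts `disable=...`.
    """
    autolog(disable=True)
    try:
        yield
    finally:
        autolog(disable=False)  # re-enable with default settings


# Demo with a fake recorder instead of a real autolog function.
calls = []


def fake_autolog(disable=False):
    calls.append(disable)


with autolog_disabled(fake_autolog):
    calls.append("untraced work")

print(calls)
```

With MLflow installed, the intended usage would be `with autolog_disabled(mlflow.openai.autolog): ...`.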